In the comments section of the article “Your Crowdsourced Mind” (which I recommend you read to get the most out of this article), a reader named K asked:

If everyone has a so-called crowdsourced mind, then what were the first sapient beings in the universe like, when there were no spirits to influence them yet?

Also if I have a so-called crowdsourced mind, does that mean that the way I think and the views I hold likely are not unique, even if I may feel like an odd-out freak?

This article is an edited and slightly expanded version of my reply. You can see my original response here.

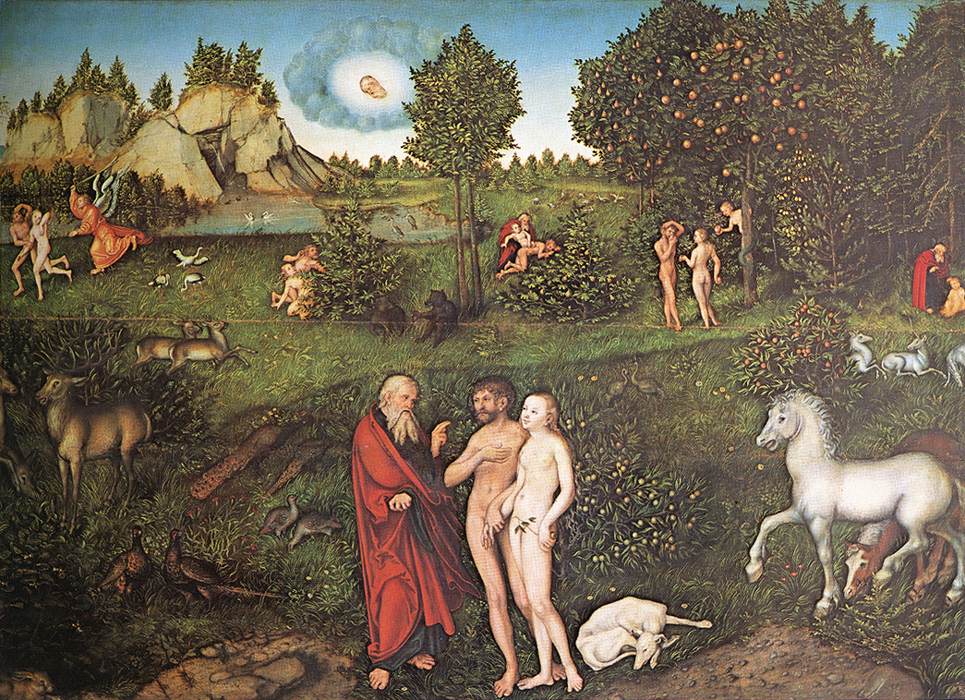

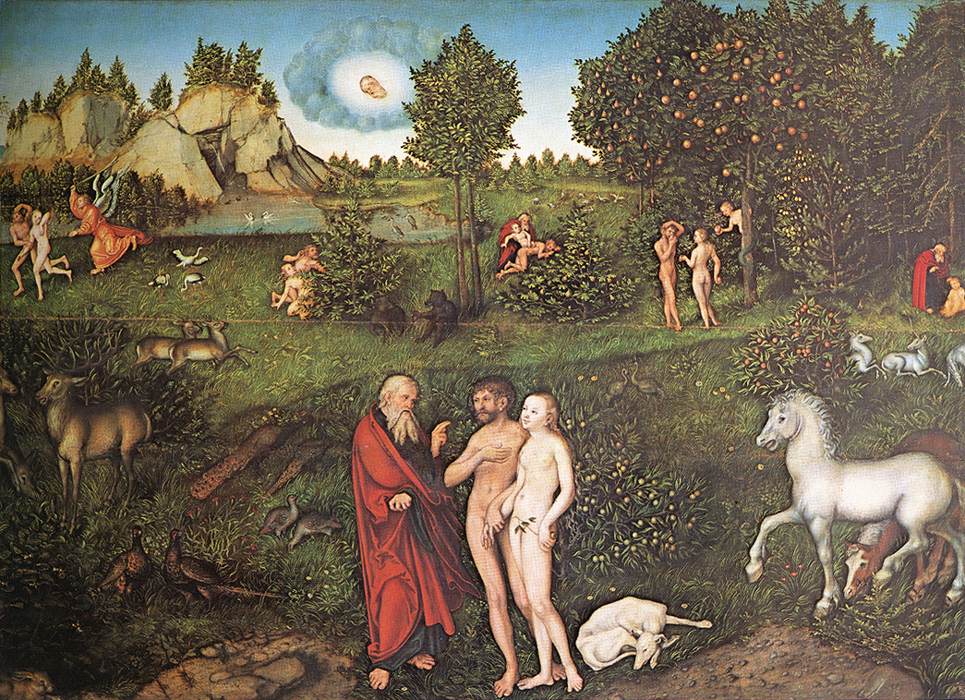

The picture painted by the earliest chapters of the Bible is that the earliest humans on earth had God as their companion and source. God walked with them in the garden (see Genesis 3:8). Ultimately, God is where everything comes from. If there were no other humans, angels, or spirits for the love and wisdom from God to flow through on its way to us, it would flow into us directly from God.

Adam and Eve, by Lucas Cranach

But even in that case, God’s love and wisdom flow through the higher layers of the spiritual universe on their way to us. God created all the levels of the spiritual universe first, before the material world and its levels, not so much in time as in order and priority. Creation flows from the inside out through all the layers of the universe, which form something like a series of concentric circles surrounding its center, which is God. Since we humans are finite, not infinite, and created, not divine, we can’t receive anything directly from God, any more than we receive sunlight directly from the sun. It must first flow through the spiritual distance between us and God.

Now that Jesus has been born and glorified, we can receive thoughts and feelings directly and personally from God via the “Son” and the “Holy Spirit,” which are the human presence and power of God. But that’s a different topic altogether. And the earliest humans lived long before Jesus was born, so for the purposes of this article we’ll set that aside. For the real meaning of the Father, Son, and Holy Spirit, please see: “Who is God? Who is Jesus Christ? What about that Holy Spirit?”

Besides, the people represented by “Adam” in the Bible were not the first spiritually aware people on earth.

What do the Ten Commandments, psychics, the CIA, and social media have in common?

“The Spirit of the Lord is on me, because he has anointed me to preach good news to the poor. He has sent me to proclaim freedom for the prisoners and recovery of sight for the blind, to release the oppressed, to proclaim the year of the Lord’s favor.” (Luke 4:18–19, quoted from Isaiah 61:1–2)